
At the temple of Apollo at Delphi, the residence of the fabled Oracle, a golden “E” hung in the entranceway and no one knew what it meant. Originally, it was a large wooden “E,” and over the centuries, various potentates replaced it with increasingly expensive materials. Caesar Augustus donated a solid gold “E.”

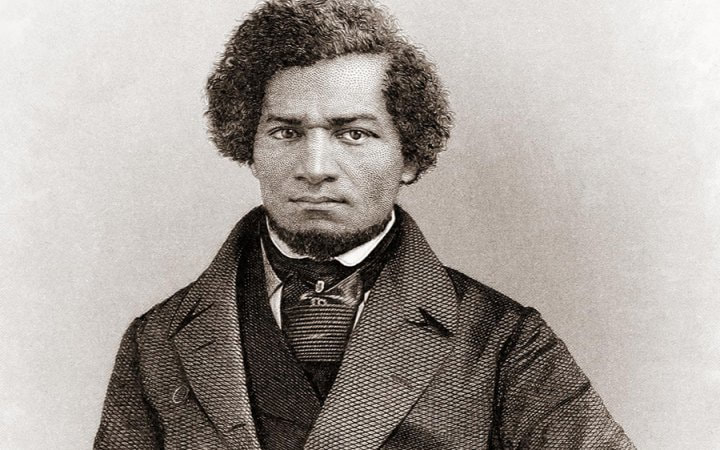

Even ancient scholars did not know the meaning. Plutarch, the famous Greco-Roman author, became a priest at Delphi and wrote an essay with an overview of five theories. Other scholars, over the centuries, have also put forth their theories. So it might be a bit rash of me, a high school history teacher in 2018, to reject their solutions and propose my own. The main issue with the previous solutions is the translation and interpretation of the third maxim, or warning. Visitors to the temple were greeted by three warnings (which were carved on a pillar, into a wall, or hanging in the entranceway, depending on the historical source). The first two warnings are famous: “Know Thyself!” “Nothing in Excess!” These are translated in various ways, but the underlying meaning is clear. However, the third warning has two translations that do not seem consistent: “Make a pledge and mischief is nigh!” or “Give surety, get ruin!” Almost every available source has some version of these two; the sources then wax poetic. What is missing from all of these translations is cultural context: Before the reforms of Solon, the renowned Athenian leader, there was a system in which you could borrow money and offer yourself as collateral. Failure to repay the loan would result in your enslavement. The warning is thus very practical and philosophical (like the first two). If you are so desperate as to offer your freedom as collateral (pledge or surety), you are guaranteed to become a slave. Or more laconically, “Bet your freedom, guarantee your slavery!” It is a bracing formulation of the value of freedom, so often discussed by ancient philosophers. 
So how does this connect to the golden “E”? A Socratic dialogue gives a clue. In Plato’s Charmides, Critias, the character Socrates is questioning, makes an interesting observation: “‘Know thyself!’ and ‘Be Temperate!’ are the same…” (“Be temperate” is an alternative translation of “Nothing in Excess.”) They go on to discuss the idea that self-knowledge leads to temperance, or the avoidance of excess. And there, the mystery is broken wide open: all three Delphic warnings are the same. What would cause someone to offer themselves as collateral? Excess. How do you know yourself? Observing yourself when you are free. How do you avoid excess? Knowing yourself. And on and on. Or more pithily: self-knowledge leads to self-control, which leads to freedom, which leads to self-knowledge. The “E” is not an “E” but an illustration of the three warnings as three horizontal lines and the vital connection between the three as the vertical line.

“Knowledge makes a man unfit to be a slave.” ― Frederick Douglass

Tom Wolfe, the famous author of The Right Stuff and Bonfire of the Vanities, was discussing the meaning of the “liberal arts.” He relates a fascinating piece of history: the liberal arts (or artes liberales) come from ancient Rome. The Romans allowed their slaves to be educated, but only in specific, useful subjects, like engineering and math. They were forbidden from learning history and philosophy, because that would make them rebellious, dangerous, and free. It is fairly amazing that the very same STEM (science, technology, engineering, and math) subjects that receive gobs of money and acres of media coverage are the exact ones to which Greek slaves in Rome were limited.

“No man can put a chain about the ankle of his fellow man without at last finding the other end fastened about his own neck.” ― Frederick Douglass

Some historians have a theory about recent history: technological progress was caused by the freeing of slaves.
Rome reached a technological plateau; they kept a massive number of slaves. It was not until the Enlightenment, with the freeing of slaves, that technology really exploded. The lack of cheap labor caused people to look for labor saving devices. The historians point to several instances, in places with a density of slaves, in which there was a resistance to the implementation of modern technology. The creepy part of the theory is that it gives you a window into slave owning. You treat your technology, according to the theory, exactly like a slave: nice, perhaps, when it is working well, and with a fury when it hesitates. And you would be loath to give it up: would you go without your computer? (Would you free your iPhone?) “I have observed this in my experience of slavery - that whenever my condition was improved, instead of increasing my contentment, it only increased my desire to be free…” ― Frederick Douglass The artificial intelligence enthusiasts are not mentioning a clear, inescapable problem with their technological ambitions: they wish to create slaves. Artificial intelligence has had many definitions, but the recent proponents are looking to create sentient computers; sentient, meaning self-aware and capable of complex thought. These conscious computers, if possible, will solve the world’s problems, make our lives easier, treat our diseases, clean our streets, prepare our food, and care for our children. Herein lies the paradox: in order for computers to be able to do these complex tasks, they must be self-aware. If they are not self-aware, they probably will not be very useful. But if the computers are self-aware, two simple questions present themselves: what if they do not want to do that work? What if they refuse? 
“Those who profess to favor freedom, and deprecate agitation, are men who want crops without plowing up the ground…” ― Frederick Douglass Our visions of the future, which underlie all of our plans and policies, are defined by what they lack: a lack of any work, a lack of messy politics and a lack of business rivalries. Look at any utopian vision from the mid 1800's onward: clean, peaceful, and technocratic. Dystopian visions make the same point in the opposite direction: no leisure, chaotic cities, and corruption. These visions are the result of a one-sidedness in our thinking; we are only planning for future consumption, and therefore think about the future like we think about our retirements. But humans are happiest when facing the adventure and glory of productive challenges. There needs to be a revolution in how we imagine the future; it needs a vision of work. “I am certain that there is nothing good, great or desirable which man can possess in this world, that does not come by some kind of labor...”

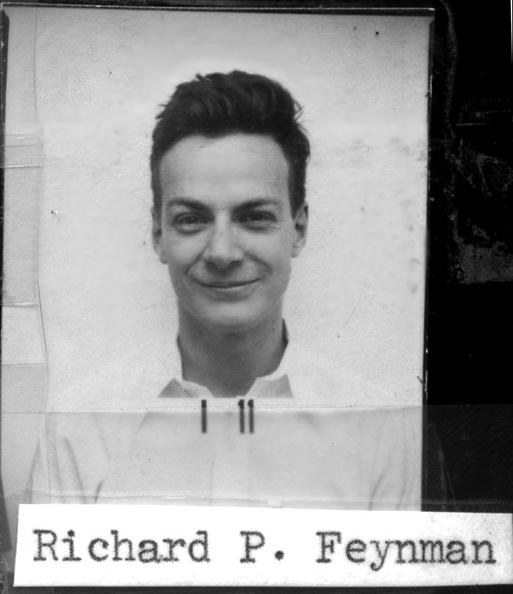

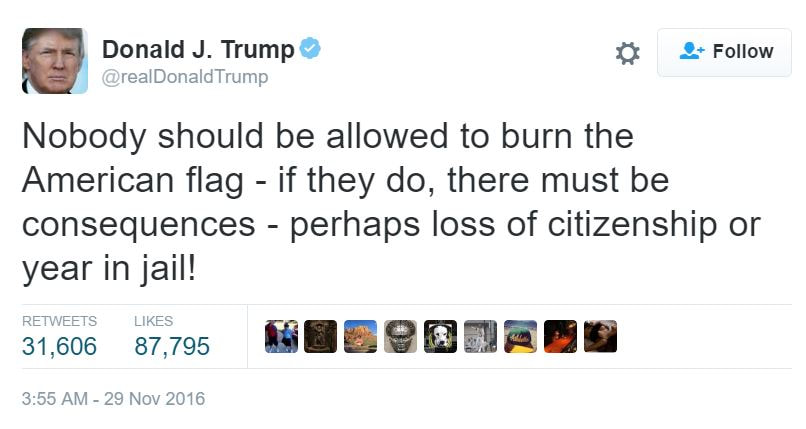

― Frederick Douglass

There is a relevant linguistic paradox: the words “create” and “produce” have very similar denotations, but “creativity” and “productivity” have almost opposite connotations. The technocrats planning our futures - the economists, statisticians, engineers and policy-makers - are obsessed with productivity. They themselves are very productive human beings: first in their classes, extremely efficient, and great at eliminating redundancies. Productivity only creates a space, however, for creativity. The technology world is remarkable for its lack of aesthetic vision. Steve Jobs, of course, is the exception that proves this rule. It is ironic that our futures are being produced by people with the same backgrounds and limitations as the Greek slaves of Ancient Rome.

“I will totally accept the results of this great and historic presidential election… if I win,” declared candidate Donald Trump, smirking like a trickster, mere weeks before the most contentious election in a generation. Jaw-wagging commenced and lengthy think pieces were published. Was he a fool, a fighter, a fascist, or a troll? A troll?

Nobel Prize-winning physicist Richard Feynman, while working on the Manhattan Project, found that many of the top-secret documents were not kept securely. He could have submitted this observation to a superior officer; instead, he taught himself to pick locks and crack safes. He left prank notes for physicists all over Los Alamos, convincing one that he had lost all of the atomic secrets to a spy. Very quickly, better locks were installed. He was known throughout his life for his playfulness and disregard for authority. He had an interesting philosophical justification for this misbehavior: social irresponsibility.

Trolling was not invented on the internet. It is interesting, and a bit sad (sad!), that people have not looked deeper into history to study this phenomenon. Journalists have attempted to define the phenomenon and have come up short.
Some have deemed it anonymous harassment, but the point is that it is harassment with a goal. Urban Dictionary goes a bit further but still fails to get the point, saying that it is harassment with the goal of inciting anger. I would like to expand Richard Feynman’s definition: “Socially conscious provocation with the goal of exposing weakness and hypocrisy.”

The original troll has to be Diogenes, the self-proclaimed “Socrates gone mad” and the founder of the philosophical school of Cynicism. He made his life an example, living without possessions in a barrel in the middle of the town square. The histories are full of hilarious stories of him mocking elite Greeks, including Alexander the Great. (Alexander found him staring at a pile of bones, with Diogenes explaining, “I am searching for the bones of your father but cannot distinguish them from those of a slave.”)

Stephen Colbert, before his recent incarnation, was a classic troll. His character, an obscenely patriotic fool who saw the world in black and white, was able to lampoon the right wing during George W. Bush’s presidency. He exposed the hypocrisy: the selective Christianity, the fake bravado, and the doublespeak.

Hugh Troy was an illustrator and “practical joker” who served during WWII. One of his most famous pranks was to create a “flypaper report” that he would submit to Washington, reporting on the number of flies caught in the mess hall. The practice immediately spread to bases around the world; it remains a fantastic comment on bureaucracy.

Some have claimed that modern art is an elaborate prank meant to expose the vapidity of today’s elite. There are many other suspected trolls: from flat-earthers (mocking believers of conspiracy theories) to Fred Phelps (mocking racists).

OK, but how are trolls “socially conscious”? Tech companies and the military use “white hat hackers” and “red teams” to test their security. A system is not really secure until these skilled “enemies” have attempted to break in.
Perhaps, we should look at trolls the same way. The current mantra on the internet is “do not feed the trolls,” meaning, do not respond to a troll’s attempted provocation. Better would be, “do not be a hypocrite.” Expose your ideas to debate, fix their weaknesses, and emerge with a more robust synthesis. In fact, a strong argument for free speech is that it makes you troll-proof. Look at how easily dictators are mocked outside their fiefdoms. Even more difficult, and beneficial, would be to look at successful examples of trolling and try to learn. (Extra points if you can learn from an example in this article; put it in the comments)

Unfortunately, with the sifting of American society and the formation of echo chambers on social media, trolling is probably in its infancy.

Many successful new technology companies seem to be deliberately creating new addictions. I analyze this and propose a remedy.

A student of mine was giving a presentation on his favorite hobby – playing video games – when I asked, “What was the longest game session you ever had?” I was expecting him to say “6 hours.” “One summer, when my parents were away, I started playing a game Tuesday afternoon and did not stop until Thursday evening. I mean, I ran to the bathroom a few times and got some drinks and food from the kitchen, but I was playing that whole time.” He played for well over 50 hours, and his deadpan expression and plausible details meant he was not exaggerating. Other kids said they had played all night a few times, surprised when they looked at the window and it was light out.

I investigated this a bit more: there is a spate of stories, mostly in East Asia, of people dying of heart failure while playing video games in cyber cafes. The heart failure was caused by exhaustion. Some of them had been playing for 80 hours. “Video game addiction” has been estimated to affect upwards of 9% of video game users under 18.

A book, “Reality is Broken,” by Jane McGonigal, traces the path the video game industry took from being a nerd niche to being the major entertainment industry it is today. The book describes the involvement of psychologists in the industry, many of whom found and named “new emotions.” The book launched the now buzzy “gamification” movement. Her discussion of the importance of feedback in games is telling: “What makes Tetris so addictive [emphasis mine] is the intensity of feedback it provides.” Did the psychologists working with the gaming industry figure out how to turn gaming into an addiction? Is this to be celebrated and imitated?
Evolutionary biologists have a term, “supernormal stimulus,” for any sensory input that overrides the inborn feedback mechanisms and creates a response far stronger than the evolved response. Nesting birds can be made to prefer artificial eggs to their own eggs. Junk food overrides mechanisms that regulate feelings of fullness so that consumption continues. (It has been suggested that the most supernormal food is flaming hot Cheetos: crunchy, fatty, sweet, “carby,” with an addictive spice added on.) Video games seem not simply to override the feedback mechanisms our brain has, but to actually hijack them. There is no “normal” stimulus that the video game is operating on; the gameplay itself creates a new need. Perhaps much of technology can be understood this way.

The traditional view of addiction, that certain people have a personality flaw that makes them prone to it, has been rethought in recent years. The original view was shaped by a flawed experiment that showed that a certain small percentage of rats became addicted when offered cocaine. These rats, it was recently pointed out, were isolated and kept in a deprived environment. When placed in a rich environment with other rats, their rates of addiction plummeted. In humans, psychologists point to the fact that 95% of the heroin “addicts” among troops in Vietnam simply gave up the drug when they returned home to their family, friends, and a non-threatening environment. So addiction, rather than a simple flaw in brain chemistry, is about bonding. The addict substitutes drugs (or video games, or junk food, or gambling) for healthy human relationships and a rich, friendly environment.

Social media can obviously exploit this need. The techniques of the gaming industry have been used to “optimize” the “user experiences” on popular social networks. The results are predictable.
“For the likes” has become a cliché supported by stories that range from pathetic (people posting fake photos of themselves with imagined girlfriends or expensive cars) to horrific (a woman slowly poisoning her child and documenting his “disease” on Facebook for internet sympathy). Many of the successful technology business models resemble traditional addictive industries. For years, the tobacco industry was focused on getting young people to smoke, as it was harder to hook adults. Look at how every new social network is focused on the preteen age group, despite this group having limited spending power. And “freemium” sounds like the local drug dealer offering party favors and then charging when the users become addicts. So what should “we” do? Technology seems to be creating these new needs with every advance. With addiction being so poorly understood, it seems hopeless that the government could react to profitable businesses in any effective manner. I propose a simple solution: beauty.

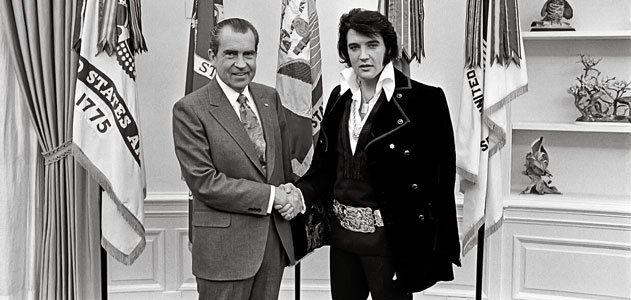

The wine drinking cultures of Southern Europe have far lower rates of alcoholism than the hard drinking cultures in Northern Europe. Alcohol is a dangerous substance that addicts many people, but treating it like a craft and obsessing over the details of it might prevent excess. (And provide a healthier social context.) Notice that there are no ultra premium cigarettes that command analogous prices to premium wines. Cigarettes are surprisingly new, having been developed at the end of the Civil War. Cigarette smoking was not widespread until the 1880s, when technological advances made mass production possible. Previous to this, tobacco was consumed in the form of cigars and pipes, with craftsmanship being an integral part of these products; use was far lower. (Note that there are very expensive cigars.) What would premium games look like? Or premium social networks? I don’t know, but they would probably involve a mix of technology and reality, rather than being isolated “infinitely scalable” products. Consider, also, the converse of consuming beautifully-crafted products: making them. It is often the creators and artists who notice and lament how much the Internet and technology distract them from their work. The wave of industrialization in the late 1800s that turned cigars into cigarettes also fomented a counter reaction, the Arts and Crafts movement. This movement, unlike later counter reactions that rejected industrial life more sharply, emphasized craftsmanship and traditional styles but wanted to incorporate the new technology. It gave birth to the Gothic Revival, Art Deco, and shaped politics and education for a few generations. The time seems ripe for a new Arts and Crafts Movement: look at all the artisanal food in hip neighborhoods of every city in the country. But instead of handmade hamburgers, let’s find a deeper and broader application of craftsmanship. It is a necessity.  
The Scottish songwriter and author Momus, looking at the early Internet in 1991, quipped that Andy Warhol got it wrong: “In the future, everyone will be famous to 15 people.” “Social networking,” a phrase common in the early days of Facebook, Myspace and Friendster, has been almost entirely supplanted by the term “social media.” As late as 2008, “social networking” had twice the search volume on Google. Today, “social media” is ten times higher. Try to remember the early versions of Facebook: there was no “newsfeed.” The early newsfeed, introduced in 2006, was a series of annoying text updates about your friend’s “actions,” which included “pokes.” (Remember pokes?) Before 2006, you logged in directly to your profile; it was a fancy form of email. Now, you log in directly to a sophisticated, personalized feed optimized for video; all traditional media and advertising companies obsess over their Facebook metrics. Most of the innovation in this industry has been a race to build a better newsfeed. Twitter (created the same year as the Facebook newsfeed), Snapchat, and Instagram are all variations on the basic idea. Sure, messaging exists on all of these platforms, but the draw is the personalized newsfeed, which you can contribute to and get “likes” and new “followers.” Social networking turned into social media, which has taken fame and made it into a commodity. Wags make fun of this trend, but there is no denying its power. I went apple picking in New Jersey and you had to ride in a truck through several parking lots to get to the orchard. The truck was overcrowded and everyone was grumbling; suddenly the truck stopped next to an amazing view of an apple orchard. Everyone got out and took photos. After two minutes, we climbed right back into the truck and rode on, but the complaining ceased. 
We were still stuck in an overcrowded truck in a crappy parking lot in New Jersey, but we had wonderful photos to post on social media and so… we were “having a good time.” For decades, people made fun of politicians for phony “photo ops”; now everyone with a social media account searches for them. People issue press releases (aka, “status updates”) for the same reasons a president or A-List celebrity would a few decades ago – to announce where they would go on vacation, where they stand on certain issues, or changes in the status of their relationships. And the language is eerily similar: vaguely positive and hinting at complexity.

The idea of fame, throughout history, was synonymous with power in all its forms. Power led to fame, and not the reverse. Great wealth led to fame. Military conquests led to fame. Previous to the invention of the photograph, art was a tool of the powerful. Sculpture, painting, and music were commissioned to amplify the powerful person’s influence. Alexander the Great brought poets and artists to his battles.

The invention of photography altered the idea of fame so completely that it is hard to comprehend the previous world. When King Louis XVI was fleeing the French Revolution, he simply changed his outfit and pretended to be a wealthy merchant to slip past border guards. He had absolute power, but no one recognized him without the royal accoutrements. Try to imagine Barack Obama putting on a t-shirt and coat and trying to sneak by border guards. Fame became more “real” with the invention of the photograph, and so candidness, even if contrived, became prized by the consuming public. A whole array of techniques to cope, like the “photo op,” emerged and were adopted by powerful people. The “image” of the person became famous, and not his works, his conquests, or the institutions he built. And so it became possible to reverse the equation: build an image and you can grab fame and become powerful.
A discussion of “image” would go way beyond the scope of this blog post; it would include the narrative supporting the image, the consumers that demand fame, the psychological effects of maintaining an image, and the evolution of propaganda. More relevant to this discussion is the production (and the producers) of image. Social media has reduced the cost of production to almost zero and thereby commodified fame. Anyone can, and many people do, make images, videos, and narratives and put them out in the world for anyone to see. Independent YouTube video makers, using free software on their computers, make videos that get more hits than those made by mainstream media. Some of the effects of this democratization are innocent: pictures of dogs that look like blueberry muffins getting more hits than articles on the State of the Union address. Some are much more consequential: young internet posters on both sides of the political aisle were calling the recent U.S. presidential election the “meme wars of 2016.”

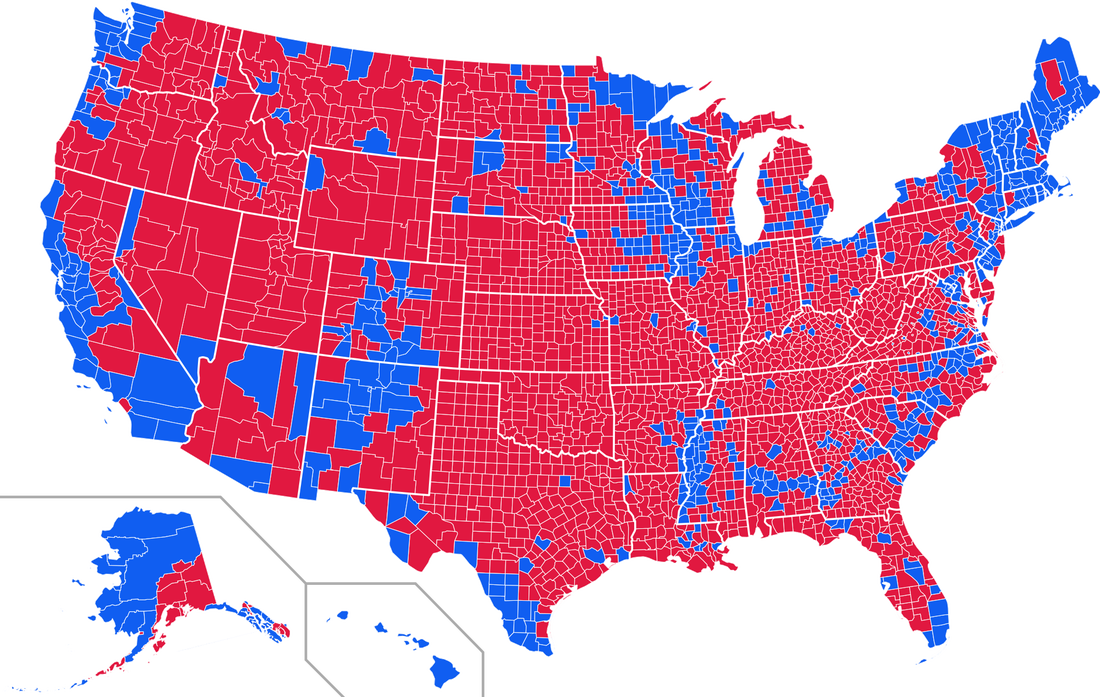

Almost 100 years ago, Walter Lippmann, a founder of the profession of public relations, and John Dewey, a philosopher and educator, debated the role of journalism in a modern democracy. Lippmann posited the idea that policy was so complex that the role of the journalist was to act as a filter between the elite policy makers and the simple public. Dewey thought of newspapers as places where dialogue and dissent would take place and policy would then be constructed from those conversations. For most of the previous century, Lippmann’s ideas (quietly) were acknowledged to be dominant. Due to social media, however, it looks like Dewey defeats Lippmann.  On Tuesday morning, I waited dutifully in line and voted here in NYC. I put in my meaningless vote for president, saw for the first time this year that Chuck Schumer was running for reelection to the Senate, and then flipped the ballot over. We were supposed to choose judges, none of whose names I recognized. The instructions: “Choose any nine.” The kicker: you were supposed to choose nine out of a possible nine! I could not help thinking, “Why the f*** did I bother showing up?” And if I was thinking that, I am sure that tons of voters on the other side of the aisle, in upstate NY, perhaps, made a right turn to their favorite bar when driving home rather than the dutiful left turn to the voting booth. I have heard various hues and cries about the Electoral College by all the people disappointed with Tuesday’s result. Clinton supporters have been sipping the weak comfort tea of “she won the popular vote.” (A result that may not hold up when all the absentee military ballots are counted.) I keep hearing people say, “If we didn’t have an Electoral College, Hillary would be president.” I will do my best Trump impression, lean in to my microphone, and say, “Wrong!” It is possible that without an electoral college, Hillary could have won, but the whole game would be so incredibly different that it is impossible to say. 
In fact, an election without an electoral college would be, if you can believe it, far worse than what we have today. Look at the map (above, from 2012) that shows results by district across the United States. There are huge swaths of red and dense areas of deep blue. The country is divided by geography: the cities, the West Coast, and the Northeast consistently and reliably vote Democrat. Right now, the campaigns are focused on relatively few swing states, like Florida, Ohio, and Pennsylvania, which have a healthy mix of demographics, industries and living situations rare in today’s horribly divided country. If the vote were simply majority rule, these would be the very areas the campaigns avoided. The concept of a “swing state” would disappear. The election would turn into a “get-out-the-vote” operation focused on each side’s base. There would be no need to try to win voters over. In this imaginary universe, Trump would spend most of his time in the center of the country and in rural areas of large population states, like California. Hillary would stay only in coastal urban areas. The alternate realities that already exist in media would metastasize as there would be no need to check each other’s arguments. There would only be a need to gin up various fears and conspiracies. It is hard to say who would have had better turnout last Tuesday in the imaginary universe, but the red areas typically have relatively higher turnout (with some caveats). So, it seems, again, that our Founding Fathers were wise. The Electoral College forces the candidates into diverse areas, creating dialogue and making the candidates persuade voters on the issues. Keep it.  It is an unacknowledged cliché that “innovation” needs to happen in education. Reformers issue soliloquies condemning the outdated “factory model of education” and the overdue need for “new thinking.” However, attempts to kindle innovation in education are not new. 
And, ironically, they all seem to have a tendency to suppress innovation. Vouchers have been around for decades. They were, and are, touted as a way to introduce market forces (and therefore, innovation) and to smash sclerotic bureaucracies (which supposedly suppress innovation). The remarkable thing about all of the experiments with vouchers is their unremarkable results: neither disasters nor miracles. And, most of all, the parents, when given a choice, opt for traditional-style schools. Charters are a bit more recent and have the same goal: free school leaders from the iron chains of the bureaucracies and they will experiment and innovate. The problem has been that the charter approval process is so afraid of corruption and failure that it has become very conservative: witness all of the “no excuses” charters, which are mimeographs of 1950’s-era Catholic schools. “Edtech” is the venture capital term for “education technology.” (Sadly, this seems limited to computers and the Internet, leaving out intriguing possibilities.) The hope, and hype, is that Silicon Valley entrepreneurs will, of course, innovate; a slurry of products and apps have hit the market in the past decade. Unfortunately, most do not take off. The products that do are not very innovative; a huge number of apps target Pre-algebra and Algebra I, which occupy a unique nexus: cooperative students and a simple subject. Outside of this sweet spot, there are fewer successes: most are small bore, like quiz-making apps, online portfolios, or web-based flashcards. One thing all of these reforms have in common, below the surface, is a hostility towards teachers. Edtech promoters have been talking for a decade about how the Khan Academy, or MOOCs, would do away with traditional teachers and tutors. Charter and voucher proponents have antagonized teacher unions. The charters that get results get them by working teachers until they quit. 
Outside of half-baked and quickly abandoned merit pay ideas, the education reform movement has done nothing to improve, or even change, the job description of a teacher. Some may argue that there have been changes to recruitment, training, and professional development. Perhaps, but to repeat: there has been almost no change to the basic job description of a teacher. There appears to be an historic consensus. Just look at the support for education reform: massive public backing, intense study from academics, unprecedented interest from the private sector, gobs of money from charity, and rare bipartisan political agreement, and yet, no innovation at the very level that education actually takes place. Why are the ed reformers so blind? Who will educate them?

Tom Wolfe, founder of “the New Journalism,” wearer of white suits, and one of the greatest living writers, has written a new nonfiction book, The Kingdom of Speech, attacking two secular saints: Charles Darwin and Noam Chomsky. The book is massively entertaining and informative – and a bit flawed – but full of his trademark verve: exclamation points and Bango! neologisms. Wolfe, although a perfect gentleman in every interview, loves attacking and mocking in his books. His skewering of the art world in “The Painted Word” is legendary. His long piece, “Radical Chic,” on the Black Panthers attending a wealthy New York party thrown by Leonard Bernstein, is one of the great essays written in the English language. I read this new work in one sitting.

First, the major flaw: he muddies the water around the concept of “evolution.” Wolfe has a problem not so much with evolution, but with one of its mechanisms, natural selection, particularly as it is applied to human evolution, especially the evolution of language. He points out that most of the stories about how language evolved (imitating birds, for example) are no better than Kipling’s “Just So” stories.
He revives the reputation of Alfred Russel Wallace, who wrote up his theory of evolution before Darwin, whom Wolfe refers to as “Charlie Darwin” and portrays as an upper class, cheating, spoiled brat. Hilarious!

He spends the second half of the book attacking Noam Chomsky. Chomsky, in this portrait, starts out not only revolutionizing linguistics, but also all social sciences and the role of intellectuals in American culture. He has a meteoric rise that puts him alongside the great minds of history. The only problem is that his theory of a Universal Grammar Device is wrong. Daniel Everett, Chomsky’s rival, has found a tribe in the Amazon with few of the features of language asserted by Chomsky. Wolfe paints Everett in a similar light to Wallace: a hardy adventurer outside the mainstream. What is shocking, if true, are the stories about Chomsky and his acolytes shunning Everett and his supporters. One philosopher, David Papineau, has confirmed on Twitter that he was hounded by the “Chomsky thought police” after writing a positive review of Everett. Wow!

Wolfe asserts that speech and language (and grammar) are not encoded in the brain, as Chomsky has built his career asserting, but are artifacts, like “a Buick or lightbulb.” By “artifact,” he seems to mean “tool.” Language is a tool that creates memory and therefore planning, culture, and learning. Everything we have is a result of this tool.

If Wolfe exchanged the word “artifact” for “technology,” there could be room for agreement between the two old legends. There has been a bit of formal academic work around the idea that humans coevolved with their technology. We start hunting with spears, for example, and the shoulder (and brain) evolved to optimize the skill of spear throwing.
(And then we developed better spears, requiring better shoulders and bigger brains, which then improved the weapons, and on and on…) Language is just another piece of technology, requiring increasing skill to use, and with increasing payoffs for each improvement, creating a positive feedback loop. A tantalizing piece of evidence supports this theory: the evolution of handedness. We seem to have become (mostly) right-handed (more precise, more accurate, more skilled) at around the same time we developed speech (and big-game hunting and art). Perhaps there is not a Universal “Grammar” Device, but something broader, something to help master all human technology. Perhaps a Universal Skill Device, with “skill” defined as the ability to effectively use technology. This skill device would encompass more than just the linguistic, including also the athletic and artistic. Wolfe's "Kingdom of Speech" lies within a broader "Empire of Skill." It is interesting to note that the ancient Greek and Roman philosophers, who thought it essential that all their pupils learn athletics, art, and languages, would agree.

A recent study by Nicholas Papageorge demonstrated that certain bad behaviors in school are associated with a range of positive outcomes in life. To policymakers and schools, who recently have become obsessed with “noncognitive” outcomes in education (like grit), this result is devastating. Not only are schools ignoring traits that lead to success, they are suppressing them. Papageorge’s insight was to separate bad behavior into two camps: externalizing and internalizing. The kids with externalizing bad behaviors, like aggression, ended up more successful in life. There is theoretical justification for this split between externalizing behaviors and internalizing ones (depression, anxiety), but it is not in the DSM. 
In fact, personality psychology, from which education researchers draw their ideas about these “non-cognitive” outcomes, has a complicated history, with new perspectives gaining credence all the time. Researchers currently draw on the “five-factor” model, which has distilled personality into the OCEAN traits (Openness, Conscientiousness, Extraversion, Agreeableness, and Neuroticism). There is also a considerable amount of interest in Angela Duckworth’s research on “grit.” Both of these models have drawn strong criticism. Recent studies show that “grit” is a mere rebranding of “conscientiousness.” Critics of the five-factor model point out that the five traits are largely heritable and stable over the course of a person’s life, making changing them a silly goal for schools. Other personality researchers connect temperaments to neurotransmitters and hormones. Dr. Helen Fisher’s work in this area is enlightening; she asserts that people have four main temperament dimensions (dopamine, serotonin, testosterone, and estrogen/oxytocin). The externalizing behaviors that are punished in school but rewarded in life correspond to the temperaments associated with testosterone and dopamine. The testosterone dimension includes such bad behaviors as rage and bullying, but also helpful ones like intensified focus and social dominance. The dopamine dimension includes bad behaviors like a lack of inhibition, but also curiosity, creativity, and energy. Other personality researchers take an evolutionary focus. There has been recent focus on the effect of a person’s “disgust sensitivity” on his overall personality. People with a heightened sense of disgust are cleaner and adhere closely to rules. They are also more homophobic and racist, as they are literally disgusted by things that are different from them. Two main problems with the recent obsession with “noncognitive” outcomes present themselves. 
First, lurking variables will hurt any study that draws on half-baked theories. Are the researchers measuring grit and conscientiousness, or are they measuring a heightened sense of disgust and a lack of curiosity and social dominance? The second problem is more devastating. Helen Fisher draws a distinction between temperament and character. Temperaments are the emotional styles that come from your hard wiring. These may be somewhat malleable, but only within a range. Character, on the other hand, is the result of what you do with your experiences. Much of the recent writing on character and education does not draw this distinction. An analogous situation would be an economist studying health outcomes and telling doctors to focus on (adult) height because the economist found a strong correlation between height and health. Wouldn’t that be ridiculous? (To hear more about a less ridiculous take on character and education, click here.) |

Author

I'm an entrepreneur and I teach math, history, economics, and fitness. I'm looking for arguments.

Archives

November 2019

|
